[DAY14] Advanced Label Usage: Affinity and anti-affinity

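Scheduling pods onto specific nodes

By default, the Kubernetes scheduler tends to favor the node with the lightest resource load, so pods generally end up spread across the nodes.

What if your environment needs a pod to run on one specific node?

Read on.

First, check which labels the nodes already have: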

kubectl get nodes --show-labels

NAME STATUS ROLES AGE VERSION LABELS

k8s-playground-control-plane Ready control-plane,master 4m45s v1.21.1 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-playground-control-plane,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node-role.kubernetes.io/master=,node.kubernetes.io/exclude-from-external-load-balancers=

k8s-playground-worker Ready <none> 4m16s v1.21.1 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-playground-worker,kubernetes.io/os=linux

k8s-playground-worker2 Ready <none> 4m17s v1.21.1 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-playground-worker2,kubernetes.io/os=linux

Give worker the label use=high-vm and worker2 the label use=backend-only:

kubectl label nodes k8s-playground-worker use=high-vm

node/k8s-playground-worker labeled

kubectl label nodes k8s-playground-worker2 use=backend-only

node/k8s-playground-worker2 labeled

Check that both labels were applied:

kubectl get nodes --show-labels

k8s-playground-worker Ready <none> 6m10s v1.21.1 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-playground-worker,kubernetes.io/os=linux,use=high-vm

k8s-playground-worker2 Ready <none> 6m11s v1.21.1 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-playground-worker2,kubernetes.io/os=linux,use=backend-only

Now the two nodes carry different labels. Let's deploy the same redis used in the earlier posts.

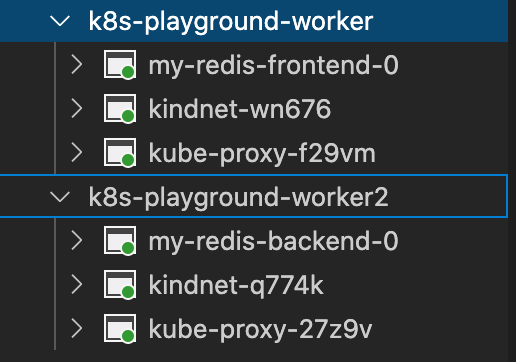

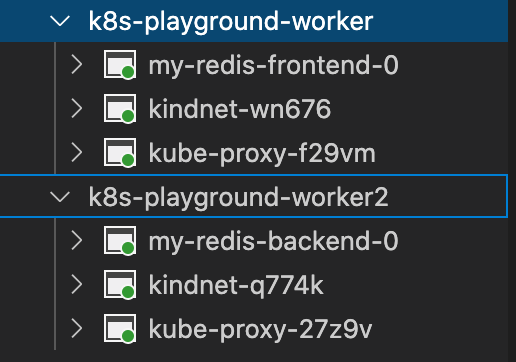

As the screenshots show, the two pods were scheduled onto different nodes.

Now let's deploy two more redis instances onto the node labeled use=high-vm:

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: my-redis-backend1
  labels:
    app.kubernetes.io/name: my-redis
    env: dev
    user: backend
spec:
  serviceName: my-redis-backend1
  replicas: 1
  selector:
    matchLabels:
      app.kubernetes.io/name: my-redis
  template:
    metadata:
      labels:
        app.kubernetes.io/name: my-redis
        env: dev
        user: backend
    spec:
      containers:
        - name: my-redis-backend1
          image: "redis:latest"
          imagePullPolicy: IfNotPresent
          args: ["--appendonly", "yes", "--save", "600", "1"]
          ports:
            - name: redis
              containerPort: 6379
              protocol: TCP
          volumeMounts:
            - name: data
              mountPath: /data
          resources: {}
      nodeSelector: # add this
        use: high-vm
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: ["ReadWriteOnce"]
        resources:
          requests:
            storage: 1Gi
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: my-redis-frontend1
  labels:
    app.kubernetes.io/name: my-redis
    env: dev
    user: frontend
spec:
  serviceName: my-redis-frontend1
  replicas: 1
  selector:
    matchLabels:
      app.kubernetes.io/name: my-redis
  template:
    metadata:
      labels:
        app.kubernetes.io/name: my-redis
        env: dev
        user: frontend
    spec:
      containers:
        - name: my-redis-frontend1
          image: "redis:latest"
          imagePullPolicy: IfNotPresent
          args: ["--appendonly", "yes", "--save", "600", "1"]
          ports:
            - name: redis
              containerPort: 6379
              protocol: TCP
          volumeMounts:
            - name: data
              mountPath: /data
          resources: {}
      nodeSelector: # add this
        use: high-vm
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: ["ReadWriteOnce"]
        resources:
          requests:
            storage: 1Gi

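Next, confirm that both were scheduled onto the node labeled use=high-vm (for example, with kubectl get pods -o wide).

The result shows that both new redis pods landed on the worker node.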
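nodeSelector can also list more than one label, in which case a node has to carry all of them. The fragment below is a minimal sketch, not taken from the original post. It combines the use=high-vm label added above with the kubernetes.io/os=linux label already visible in the node listing, and it belongs under the pod template's spec:

```yaml
# Sketch only: every entry must match (logical AND) for a node to qualify.
nodeSelector:
  use: high-vm              # label added earlier with kubectl label
  kubernetes.io/os: linux   # built-in label shown by --show-labels
```

In this cluster only k8s-playground-worker carries both labels, so the pods would land on the same node as with use: high-vm alone.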

Node Affinity and anti-affinity

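Affinity and anti-affinity are opposite behaviors, so this section explains only affinity.

Affinity is similar to the nodeSelector shown above, but nodeSelector only supports exact key=value matches and cannot express richer conditions.

Affinity offers two kinds of rules:

- requiredDuringSchedulingIgnoredDuringExecution: the required prefix means a node must satisfy the condition before the pod can be scheduled onto it. If no node matches, the pod is not scheduled and stays Pending.

- preferredDuringSchedulingIgnoredDuringExecution: if a node matches the condition, the scheduler favors it; if no node matches, the pod is still scheduled onto some other node.

In both names, IgnoredDuringExecution means that a pod already running on a node is not evicted if the node's labels change later.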
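The matchExpressions used by node affinity accept operators beyond simple equality (In, NotIn, Exists, DoesNotExist, Gt, Lt), which is the kind of condition nodeSelector cannot express. The fragment below is a minimal sketch, not taken from the original post. It assumes the use labels applied earlier and uses NotIn to keep a pod off the backend-only node, which is how node anti-affinity is usually written:

```yaml
# Sketch only: place under spec.template.spec of a workload.
affinity:
  nodeAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
      nodeSelectorTerms:
        - matchExpressions:
            # NotIn works as node anti-affinity: avoid nodes where use=backend-only
            - key: use
              operator: NotIn
              values:
                - "backend-only"
```

Note that a node with no use label at all also satisfies NotIn, so this rule excludes only the node explicitly labeled backend-only.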

Here are the same two StatefulSets from the nodeSelector example, now using node affinity:

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: my-redis-backend1
  labels:
    app.kubernetes.io/name: my-redis
    env: dev
    user: backend
spec:
  serviceName: my-redis-backend1
  replicas: 1
  selector:
    matchLabels:
      app.kubernetes.io/name: my-redis
  template:
    metadata:
      labels:
        app.kubernetes.io/name: my-redis
        env: dev
        user: backend
    spec:
      containers:
        - name: my-redis-backend1
          image: "redis:latest"
          imagePullPolicy: IfNotPresent
          args: ["--appendonly", "yes", "--save", "600", "1"]
          ports:
            - name: redis
              containerPort: 6379
              protocol: TCP
          volumeMounts:
            - name: data
              mountPath: /data
          resources: {}
      affinity: # affinity settings
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution: # the node must satisfy this condition
            nodeSelectorTerms:
              - matchExpressions: # condition: use=backend-only
                  - key: use
                    operator: In
                    values:
                      - "backend-only"
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: ["ReadWriteOnce"]
        resources:
          requests:
            storage: 1Gi
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: my-redis-frontend1
  labels:
    app.kubernetes.io/name: my-redis
    env: dev
    user: frontend
spec:
  serviceName: my-redis-frontend1
  replicas: 1
  selector:
    matchLabels:
      app.kubernetes.io/name: my-redis
  template:
    metadata:
      labels:
        app.kubernetes.io/name: my-redis
        env: dev
        user: frontend
    spec:
      containers:
        - name: my-redis-frontend1
          image: "redis:latest"
          imagePullPolicy: IfNotPresent
          args: ["--appendonly", "yes", "--save", "600", "1"]
          ports:
            - name: redis
              containerPort: 6379
              protocol: TCP
          volumeMounts:
            - name: data
              mountPath: /data
          resources: {}
      affinity:
        nodeAffinity:
          preferredDuringSchedulingIgnoredDuringExecution: # prefer a matching node when one exists
            - weight: 70 # weight 70: the stronger preference
              preference:
                matchExpressions:
                  - key: use
                    operator: In
                    values:
                      - "high-vm"
            - weight: 30 # weight 30: the weaker preference
              preference:
                matchExpressions:
                  - key: use
                    operator: In
                    values:
                      - "backend-only"
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: ["ReadWriteOnce"]
        resources:
          requests:
            storage: 1Gi
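The two modes can also be combined in a single nodeAffinity block: the required rule filters the candidate nodes, and the preferred rule then ranks whatever remains. The fragment below is a sketch of that combination rather than something from the original post. It requires that a node has any use label (the Exists operator takes no values) and prefers the high-vm node among those:

```yaml
# Sketch only: place under spec.template.spec of a workload.
affinity:
  nodeAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
      nodeSelectorTerms:
        - matchExpressions:
            - key: use
              operator: Exists   # any value of "use" qualifies
    preferredDuringSchedulingIgnoredDuringExecution:
      - weight: 70
        preference:
          matchExpressions:
            - key: use
              operator: In
              values:
                - "high-vm"
```

With the labels from this post, both workers pass the required filter and k8s-playground-worker receives the extra preference.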

Pod Affinity and anti-affinity

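Put simply, this ties pods to other pods. If a deployment needs one pod to always be placed together with another, a couple that must not be split up, pod affinity can do it.

Replace the nodeAffinity block with podAffinity: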

affinity:
  podAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
      - topologyKey: kubernetes.io/hostname
        labelSelector:
          matchExpressions:
            - key: app
              operator: In
              values:
                - test-pod-Affinity

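This way the pod goes looking for its one true match: it is only scheduled onto a node that already runs a pod labeled app=test-pod-Affinity.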
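Pod anti-affinity is the mirror image: it keeps a pod away from nodes that already run matching pods. The sketch below is an illustrative assumption, not part of the original post. It reuses the app.kubernetes.io/name: my-redis label from the StatefulSets above and uses the preferred mode, so scheduling still succeeds when every node already runs a redis pod:

```yaml
# Sketch only: place under spec.template.spec of a workload.
affinity:
  podAntiAffinity:
    preferredDuringSchedulingIgnoredDuringExecution:
      - weight: 100
        podAffinityTerm:
          topologyKey: kubernetes.io/hostname   # a single node is the unit of separation
          labelSelector:
            matchLabels:
              app.kubernetes.io/name: my-redis
```

In the preferred form each entry wraps its rule in podAffinityTerm and carries a weight, unlike the required form shown above, where topologyKey and labelSelector sit directly in the list item.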

For more information, see the following:

Official documentation

Affinity and anti-affinity scheduling
