[Neural Machine Translation: Theory and Practice] Building an English-Chinese Translator from Scratch (I)

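Preface

Starting today, we will build a neural network that translates English into Chinese. Today we begin with the corpus and text preprocessing.

Building the Translator

From Corpus to Dataset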

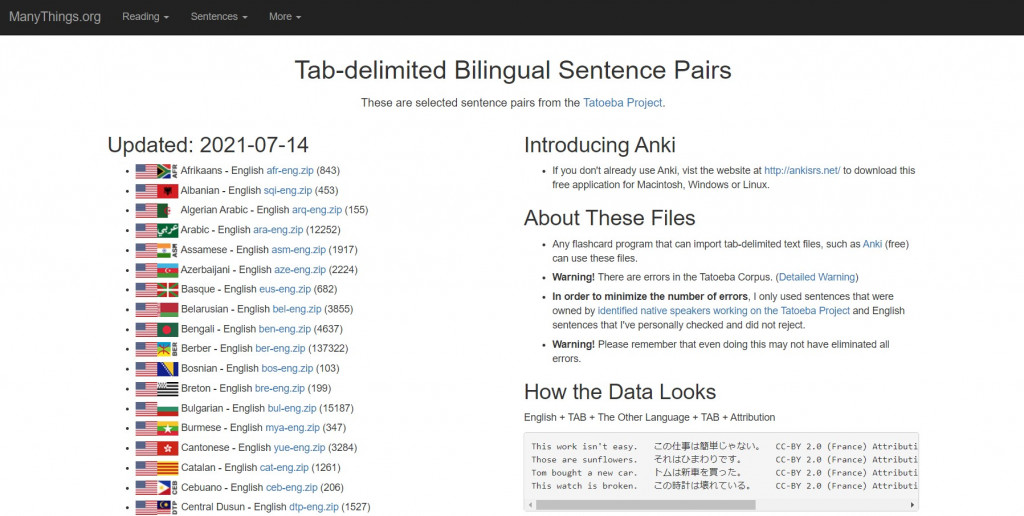

Here we pick and download an English-Chinese corpus from a public website of parallel corpora: Tab-delimited Bilingual Sentence Pairs

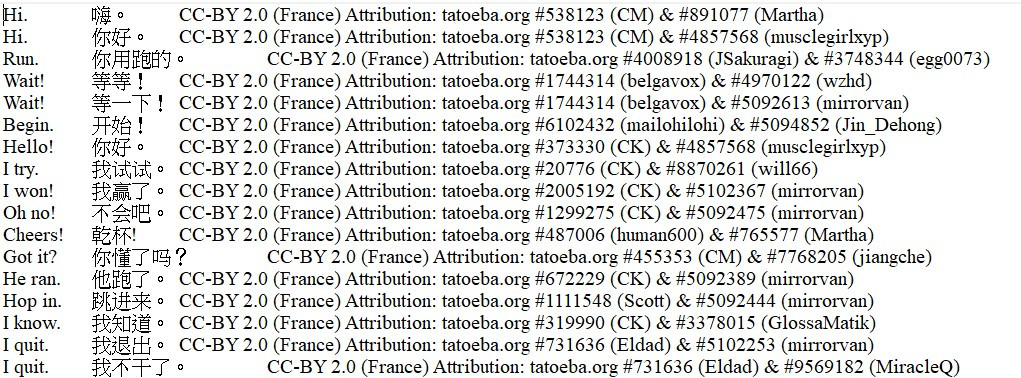

The raw corpus file looks like this:

First, we load the corpus file and store each line of text in a list object:

import re
import pickle as pkl

data_path = "data/raw_parallel_corpora/eng-cn.txt"

with open(data_path, 'r', encoding="utf-8") as f:
    lines = f.read().split('\n')
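To make the line format concrete, here is a minimal sketch of splitting one tab-delimited line. The sample line is made up for illustration, and the assumption that each line holds an English sentence, a Chinese sentence, and a third attribution field is inferred from the three-field check used later in this post.

```python
# Hypothetical sample line; the real file's sentences and attribution text will differ.
sample_line = "Hi.\t嗨。\tattribution text (hypothetical)"

# Split on tabs: field 0 is assumed to be English, field 1 Chinese.
fields = sample_line.split("\t")
english, chinese = fields[0], fields[1]

print(len(fields))
print(english)
print(chinese)
```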

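We split each line into its two languages and preprocess each sentence (lowercasing, removing redundant whitespace, and separating words from punctuation marks), then add the start-of-sentence token <SOS> and the end-of-sentence token <EOS>. Because the writing conventions differ, Chinese and English need separate preprocessing functions (for a pair from the same language family, such as English-German, there is no need to define two functions):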

def preprocess_cn(sentence):
    """
    Lowercases a Chinese sentence and inserts a whitespace between every two characters.
    Surrounds the split sentence with <SOS> and <EOS>.
    """
    # lowercases and removes whitespaces from both ends of the sentence
    sentence = sentence.lower().strip()
    # drops whitespaces inside the sentence so that the join below yields single separators
    sentence = re.sub(r"\s+", "", sentence)
    # inserts a whitespace between every two characters
    sentence = " ".join(sentence)
    # attaches starting token and ending token
    sentence = "<SOS> " + sentence + " <EOS>"
    return sentence

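def preprocess_eng(sentence):
    """
    Lowercases an English sentence and inserts a whitespace between words and punctuation marks.
    Surrounds the split sentence with <SOS> and <EOS>.
    """
    sentence = sentence.lower().strip()
    # inserts a whitespace before each punctuation mark
    sentence = re.sub(r"([,.!?\"'])", r" \1", sentence)
    # replaces every character other than letters and the punctuation marks above with a whitespace
    sentence = re.sub(r"[^a-zA-Z,.!?\"']", ' ', sentence)
    # collapses redundant whitespaces last, because the previous step can introduce new ones
    sentence = re.sub(r"\s+", " ", sentence).strip()
    # attaches starting token and ending token
    sentence = "<SOS> " + sentence + " <EOS>"
    return sentence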
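The two functions differ mainly in where the token boundaries fall: per character for Chinese, per word and punctuation mark for English. The sketch below isolates just that difference. The helper names `char_tokens` and `word_tokens` are hypothetical, and unlike the functions above they return token lists and skip the <SOS>/<EOS> markers.

```python
import re

def char_tokens(text):
    """Character-level split, as used for Chinese; whitespace is dropped."""
    return [ch for ch in text.lower() if not ch.isspace()]

def word_tokens(text):
    """Word-level split for English: put a space before punctuation, then split on whitespace."""
    text = re.sub(r"([,.!?\"'])", r" \1", text.lower())
    return text.split()

print(char_tokens("我很好。"))    # → ['我', '很', '好', '。']
print(word_tokens("I am fine."))  # → ['i', 'am', 'fine', '.']
```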

Next, we store the sentence pair from each line in seq_pairs:

# regardless of source and target languages
seq_pairs = []
for line in lines:
    fields = line.split('\t')
    # ensures that the line loaded contains both an English and a Chinese sentence
    if len(fields) >= 3:
        eng_doc, cn_doc = fields[0], fields[1]
        eng_doc = preprocess_eng(eng_doc)
        cn_doc = preprocess_cn(cn_doc)
        seq_pairs.append([eng_doc, cn_doc])

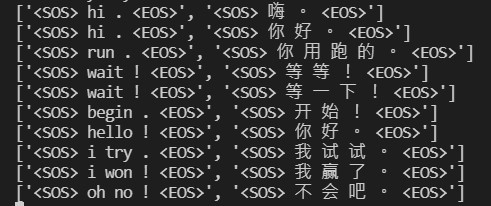

Let's inspect the first ten preprocessed English-Chinese sequences:
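One way to do this inspection is sketched below. The pairs are stand-in data written for illustration, not actual corpus entries. The sketch also checks that every sequence carries both boundary tokens.

```python
# Stand-in data; in the post, seq_pairs is built from the corpus file.
seq_pairs = [
    ["<SOS> hi . <EOS>", "<SOS> 嗨 。 <EOS>"],
    ["<SOS> run . <EOS>", "<SOS> 跑 。 <EOS>"],
]

# Slicing with [:10] is safe even when fewer than ten pairs exist.
for eng_doc, cn_doc in seq_pairs[:10]:
    print(eng_doc, "->", cn_doc)

# Sanity check: every sequence starts with <SOS> and ends with <EOS>.
well_formed = all(
    doc.startswith("<SOS> ") and doc.endswith(" <EOS>")
    for pair in seq_pairs
    for doc in pair
)
print(well_formed)
```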

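Before building the dataset proper, we write the preprocessed sentence pairs to a pkl file so that they can be loaded directly when building the dataset later, and so that either Chinese or English can be chosen as the source or target language.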

# Save list seq_pairs to file
with open("data/eng-cn.pkl", "wb") as f:
    pkl.dump(seq_pairs, f)
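A quick round trip can confirm that the pickled list comes back unchanged. This is a minimal sketch with stand-in data, and it writes to a temporary directory instead of the data/ folder used in this post.

```python
import os
import pickle as pkl
import tempfile

pairs = [["<SOS> hi . <EOS>", "<SOS> 嗨 。 <EOS>"]]  # stand-in data

# A temporary directory stands in for the post's data/ folder.
with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "eng-cn.pkl")
    with open(path, "wb") as f:
        pkl.dump(pairs, f)
    with open(path, "rb") as f:
        restored = pkl.load(f)

print(restored == pairs)  # → True
```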

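In this hands-on translation project, I set English as the input language (source language) and Chinese as the output language (target language).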
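Because each stored pair keeps both languages, the translation direction only depends on which element is read as the source. A minimal sketch with a stand-in pair, assuming the [English, Chinese] order used when the pairs were saved (the names `eng_to_cn` and `cn_to_eng` are hypothetical):

```python
# Stand-in data in [English, Chinese] order.
seq_pairs = [["<SOS> hi . <EOS>", "<SOS> 嗨 。 <EOS>"]]

# English -> Chinese: read each pair as stored.
eng_to_cn = [(eng, cn) for eng, cn in seq_pairs]

# Chinese -> English: swap the two elements.
cn_to_eng = [(cn, eng) for eng, cn in seq_pairs]

print(eng_to_cn[0])
print(cn_to_eng[0])
```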

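Suppose we are now in a separate program. We can read the preprocessed strings back from the pkl file and collect them into document lists by source language and target language: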

import pickle as pkl

# Retrieve pickle file of sequence pairs
with open("data/eng-cn.pkl", "rb") as f:
    seq_pairs = pkl.load(f)

# text corpora (source: English, target: Chinese)
src_docs = []
tgt_docs = []
src_tokens = []
tgt_tokens = []

for pair in seq_pairs:
    src_doc, tgt_doc = pair
    # English sentence
    src_docs.append(src_doc)
    # Chinese sentence
    tgt_docs.append(tgt_doc)
    # tokenisation
    for token in src_doc.split():
        if token not in src_tokens:
            src_tokens.append(token)
    for token in tgt_doc.split():
        if token not in tgt_tokens:
            tgt_tokens.append(token)
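The `token not in src_tokens` check scans the whole list for every token, which can get slow on a large corpus. A possible alternative, sketched here under the assumption that first-seen order should be kept, uses a dict so that each membership test is a hash lookup and every token also gets an integer index. The function name `build_vocab` and the sample documents are hypothetical.

```python
def build_vocab(docs):
    """Map each distinct whitespace-separated token to an index, in first-seen order."""
    vocab = {}
    for doc in docs:
        for token in doc.split():
            if token not in vocab:
                vocab[token] = len(vocab)
    return vocab

docs = ["<SOS> hi . <EOS>", "<SOS> run . <EOS>"]  # stand-in data
vocab = build_vocab(docs)
print(vocab)
# → {'<SOS>': 0, 'hi': 1, '.': 2, '<EOS>': 3, 'run': 4}
```

`list(vocab)` gives the same ordered token list that the loop above builds.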

That's all for today. Tomorrow we will continue by building the training dataset. Good night, everyone!

Further reading

- How to Develop a Neural Machine Translation System from Scratch

- Seq2Seq Learning & Neural Machine Translation
